Recently I’ve been spending a fair bit of effort working on Azure Kubernetes Service. I don’t think it really needs repeating, but AKS is an absolutely phenomenal product. You get all the excellence of the K8s platform, with a huge percentage of the overhead managed by Microsoft. I’m obviously biased as I spend most of my time on Azure, but I definitely find it easier than GKE & EKS.

The main problem I have with AKS is cost. Not for production workloads or business operations, but for lab scenarios where I just want to test my manifests, Helm charts or whatever. There are plenty of options for spinning up clusters on demand for lab scenarios, or even for reducing the cost of an always-present cluster: Terraform, Kind or even just right-sizing and power management. I could definitely find a solution that fits within my current Azure budget.

Never being one to take the easy option, I’ve taken a slightly different approach for my lab needs: a two node (soon to be four) Raspberry Pi Kubernetes cluster.

Besides just being cool, it’s great to have a permanent cluster available for personal projects, with the added bonus that my Azure credit is saved for more deserving work!

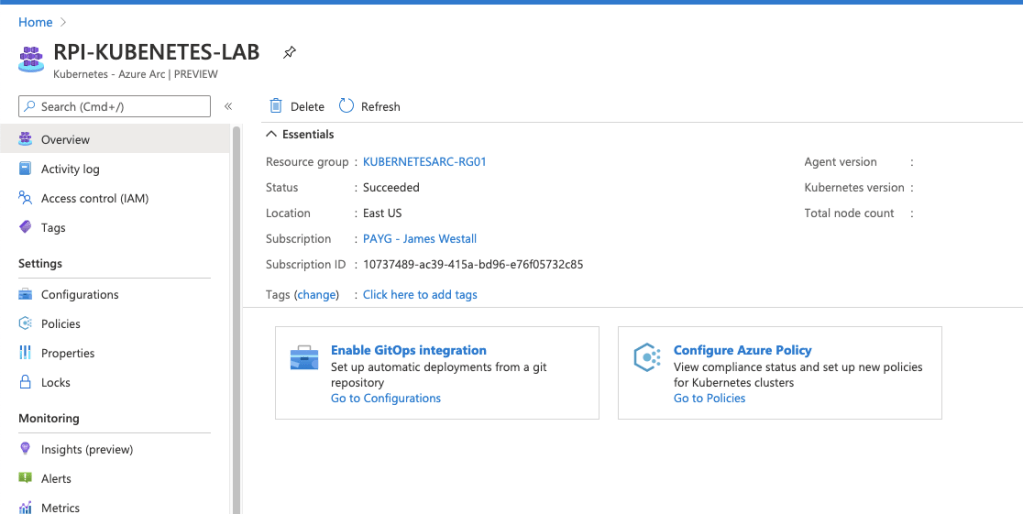

That’s all well and good, I hear you saying, but I needed this cluster to lab AKS scenarios, right? Microsoft has been slowly working to integrate “non AKS” Kubernetes into Azure in the form of Arc enabled clusters – think of this almost as Azure’s competitor to Google Anthos, but with so much more. The reason? Arc doesn’t just cover the K8s platform; it brings a whole host of Azure capability right onto the cluster.

The setup

Configuring a connected Arc cluster is a monumentally simple task for clusters which pass muster. Two steps, to be exact.

1. Enable the preview Azure CLI extensions

az extension add --name connectedk8s

az extension add --name k8sconfiguration

2. Run the CLI command to connect the cluster to Arc

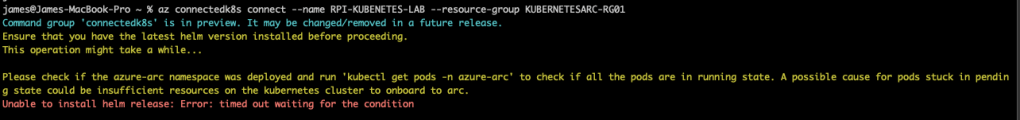

az connectedk8s connect --name RPI-KUBENETES-LAB --resource-group KUBERNETESARC-RG01

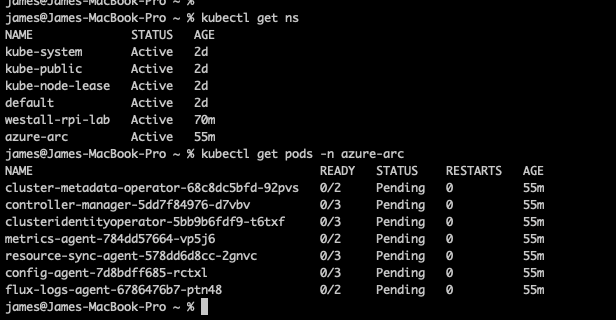

In the case of my Raspberry Pi cluster – arm64 architecture really doesn’t cut it. Shortly after you run the connect command you will hit a timeout and discover the Arc pods stuck in a Pending state.
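If you want to see the stuck pods for yourself, a rough sketch like the one below should do it. It assumes the Arc agents were deployed into the `azure-arc` namespace and that `kubectl` is pointed at the cluster; the function names are just ones I made up for illustration.

```shell
#!/usr/bin/env bash
# Sketch only: assumes the Arc agents live in the azure-arc namespace.

# List the Arc agent pods the scheduler has not been able to place.
pending_arc_pods() {
  kubectl get pods -n azure-arc --field-selector=status.phase=Pending -o name
}

# Print only the Events section of a pod description, which is where
# the scheduler normally records which constraint could not be met.
# Usage: why_pending pod/<name>
why_pending() {
  kubectl describe -n azure-arc "$1" | sed -n '/^Events:/,$p'
}
```

Running `pending_arc_pods` and then passing one of the returned names to `why_pending` is usually enough to see what the scheduler is complaining about.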

Digging into the deployments, it quickly becomes obvious that an amd64 architecture is really needed to make this work. Pods are scheduled across the board with a node selector. Removing this causes a whole host of issues related to what looks like both container compilation & software architecture. For now it looks like I might be stuck with a tantalising object in Azure & a local cluster for testing. I’ve become a victim of my own difficult tendencies!
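To compare what the nodes offer against what the deployments ask for, something like this sketch works. Again it assumes the `azure-arc` namespace, and the helper names are hypothetical; the exact selector you see may differ between agent versions.

```shell
#!/usr/bin/env bash
# Sketch only: helper names are illustrative, not part of any tool.

# Print each node with the CPU architecture its kubelet reports.
node_arches() {
  kubectl get nodes \
    -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.nodeInfo.architecture}{"\n"}{end}'
}

# Print the nodeSelector requested by each Arc agent deployment.
arc_node_selectors() {
  kubectl get deployments -n azure-arc \
    -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.template.spec.nodeSelector}{"\n"}{end}'
}

# Succeeds only if at least one node reports amd64.
has_amd64_node() {
  node_arches | awk -F'\t' '$2 == "amd64" { found = 1 } END { exit !found }'
}
```

On a cluster made up entirely of Raspberry Pis, `has_amd64_node` fails, which lines up with pods that can never be scheduled while the selector is in place.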

Right for you?

There are a lot of reasons to run your own cluster – generally speaking, if you’re doing so you will be pretty comfortable with the Kubernetes ecosystem as a whole. Arc will just be “another tool” you could add, and you should give it the same consideration you give every other service you think about using. In my opinion the biggest benefit of the service is the simplified, centralised management plane across multiple clusters. This allows me to manage my own (albeit short lived) AKS clusters and my desk cluster with centralised policy enforcement, RBAC & monitoring. I would probably look to implement it if I were running a datacenter cluster, and definitely if I were looking to migrate to AKS in the future. If you are considering it, keep in mind a few caveats:

- The Arc Service is still in preview – expect a few bumps as the service grows

- Currently only available in EastUS & WestEurope – You might be stuck for now if operating under data residency requirements.

At this point in time, I’ll content myself with a local cluster. Perhaps I’ll publish a future blog post if I manage to work through all these architecture issues. Until next time, stay cloudy!