Recently, while working on a code uplift project with a customer, I wanted a simple way to analyse our Advanced Security results. While the GitHub UI provides easy methods for basic analysis and prioritisation, we wanted to complete our reporting and detailed planning off-platform. This post covers the basic steps we followed to export GitHub Advanced Security results to a readable format!

Available Advanced Security API Endpoints

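GitHub provides a few API endpoints for Code Scanning which are important for this process. This post uses two of them: the repository alerts endpoint (/repos/{owner}/{repo}/code-scanning/alerts) and the organisation alerts endpoint (/orgs/{org}/code-scanning/alerts).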

This post will use PowerShell as our primary export tool, but reading the GitHub documentation carefully should get you going in your language or tool of choice!

Required Authorisation

As a rule, all GitHub API calls should be authenticated. While you can implement a GitHub application for this process, the easiest way is to use an authorised Personal Access Token (PAT) for each API call.

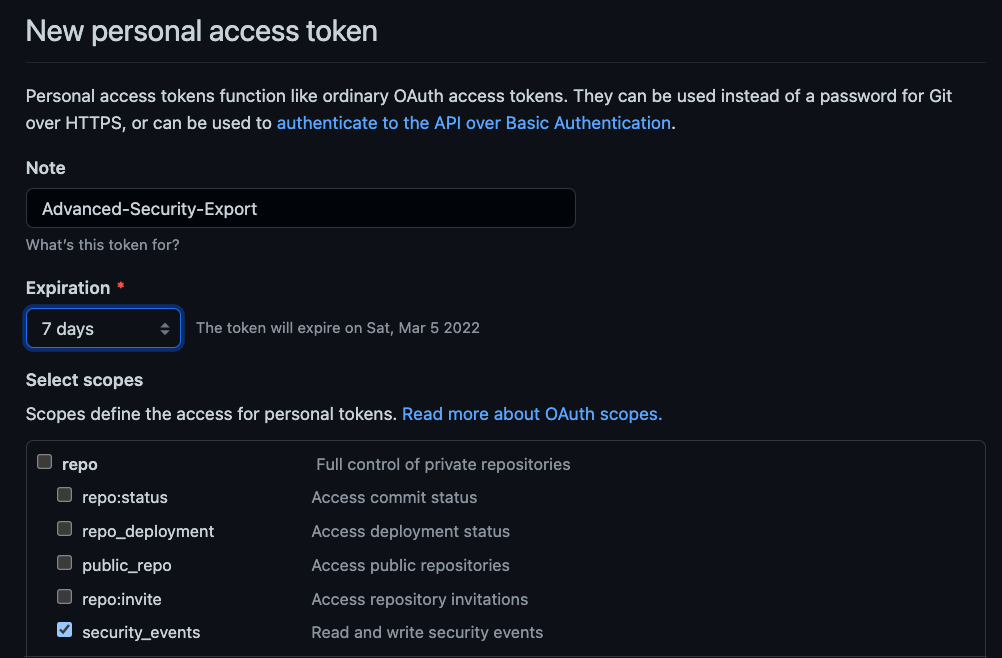

To create a PAT, navigate to your account settings, and then to Developer Settings and Personal Access Tokens. Exporting Advanced Security results requires the security_events scope, shown below.

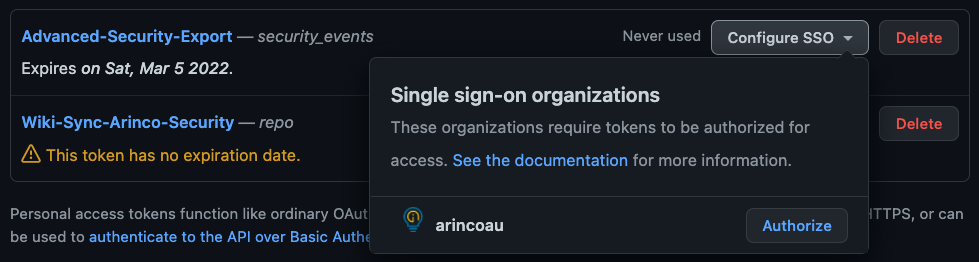

Note: Organisations which enforce SSO will require a secondary step where you log into your identity provider to authorise the token.

Now that we have a PAT, we need to build the basic authorisation API headers as per the GitHub documentation.

$GITHUB_USERNAME = "james-westall_demo-org"

$GITHUB_ACCESS_TOKEN = "supersecurepersonalaccesstoken"

$credential = "${GITHUB_USERNAME}:${GITHUB_ACCESS_TOKEN}"

$bytes = [System.Text.Encoding]::ASCII.GetBytes($credential)

$base64 = [System.Convert]::ToBase64String($bytes)

$basicAuthValue = "Basic $base64"

$headers = @{ Authorization = $basicAuthValue }

Exporting Advanced Security results for a single repository

Once we have an appropriately configured auth header, calling the API to retrieve results is really simple! Set your values for API endpoint, organisation and repo and you’re ready to go!

$HOST_NAME = "api.github.com"

$GITHUB_OWNER = "demo-org"

$GITHUB_REPO = "demo-repo"

$response = Invoke-RestMethod -FollowRelLink -Method Get -UseBasicParsing -Headers $headers -Uri https://$HOST_NAME/repos/$GITHUB_OWNER/$GITHUB_REPO/code-scanning/alerts

$finalResult += $response | %{$_}

The above code is pretty straightforward, with the URL being built from the “owner” and repo name. One thing we found a little unclear in the doco was who the owner is. For a personal public repo this is obvious, but for our GitHub EMU deployment we had to set this to the organisation instead of the creating user.
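If you want to narrow the export, the URL building described above can be wrapped in a small function. This is only a sketch: Get-CodeScanningAlertsUri is a hypothetical helper name rather than anything from GitHub, and it assumes the state and per_page query parameters that the code scanning alerts endpoint documents.

```powershell
# Hypothetical helper (not part of any GitHub module): builds the repository
# alerts URI from the owner and repo name, with optional query filters.
function Get-CodeScanningAlertsUri {
    param(
        [Parameter(Mandatory)] [string] $Owner,
        [Parameter(Mandatory)] [string] $Repo,
        [string] $HostName = 'api.github.com',
        # Assumed filter values, taken from the documented "state" parameter.
        [ValidateSet('open', 'closed', 'dismissed', 'fixed')] [string] $State,
        # The API caps page size at 100 results.
        [ValidateRange(1, 100)] [int] $PerPage = 100
    )

    $uri = "https://$HostName/repos/$Owner/$Repo/code-scanning/alerts?per_page=$PerPage"
    if ($State) { $uri += "&state=$State" }
    return $uri
}

# Owner is the organisation here, matching the EMU behaviour described above.
$uri = Get-CodeScanningAlertsUri -Owner 'demo-org' -Repo 'demo-repo' -State 'open'
```

The resulting $uri can be passed to the -Uri parameter of Invoke-RestMethod in place of the inline URL.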

Once we have a URI, we call the API endpoint with our auth headers for a standard REST response. Finally, we flatten the result into a single collection of alert objects, because Invoke-RestMethod with the -FollowRelLink parameter returns one array per page of results rather than one combined list.
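To see why that last step matters, here is a minimal sketch that needs no network access. It fakes the page-per-element shape of a paged response with made-up alert objects (the number values are sample data only) and then applies the same flattening used in this post.

```powershell
# Made-up sample data: two "pages" standing in for a paged API response.
$page1 = @(
    [pscustomobject]@{ number = 1 }
    [pscustomobject]@{ number = 2 }
)
$page2 = @(
    [pscustomobject]@{ number = 3 }
)

# Each element of $response is a whole page, not a single alert.
$response = @($page1, $page2)

# Emitting each page to the pipeline unrolls it into individual alerts.
$finalResult = @()
$finalResult += $response | ForEach-Object { $_ }

$pageCount  = $response.Count      # number of pages
$alertCount = $finalResult.Count   # number of individual alerts
```

Here %{$_} in the original snippet is simply the short alias form of ForEach-Object { $_ }.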

The outcome we quickly achieve using the above is a PowerShell object which can be exported to parsable JSON or CSV formats!
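As a rough sketch of that export step, the snippet below flattens a few nested alert properties into report columns. The alerts are made-up sample data standing in for $finalResult, the property names (number, state, rule.id, rule.severity, tool.name, html_url) are assumed from the code scanning alert schema, and the column selection is just one possible choice.

```powershell
# Made-up sample alerts standing in for the real $finalResult.
$finalResult = @(
    [pscustomobject]@{
        number   = 1
        state    = 'open'
        html_url = 'https://github.com/demo-org/demo-repo/security/code-scanning/1'
        rule     = [pscustomobject]@{ id = 'js/sql-injection'; severity = 'error' }
        tool     = [pscustomobject]@{ name = 'CodeQL' }
    }
    [pscustomobject]@{
        number   = 2
        state    = 'dismissed'
        html_url = 'https://github.com/demo-org/demo-repo/security/code-scanning/2'
        rule     = [pscustomobject]@{ id = 'js/xss'; severity = 'warning' }
        tool     = [pscustomobject]@{ name = 'CodeQL' }
    }
)

# CSV is flat, so pull nested properties up into named columns.
$report = $finalResult | Select-Object number, state,
    @{ Name = 'rule_id';  Expression = { $_.rule.id } },
    @{ Name = 'severity'; Expression = { $_.rule.severity } },
    @{ Name = 'tool';     Expression = { $_.tool.name } },
    html_url

# In-memory conversions; -Depth keeps nested objects intact in the JSON.
$csvLines = $report | ConvertTo-Csv -NoTypeInformation
$json     = $finalResult | ConvertTo-Json -Depth 5

# To write files instead (paths are placeholders):
# $report | Export-Csv -Path ./alerts.csv -NoTypeInformation
# $json   | Set-Content -Path ./alerts.json
```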

Exporting Advanced Security results for an entire organisation

Depending on the scope of your analysis, you might want to export all the results for your GitHub organisation. This is possible, but it does require elevated access: your account must be an administrator or security administrator for the org.

$HOST_NAME = "api.github.com"

$GITHUB_ORG = "demo-org"

$response = Invoke-RestMethod -FollowRelLink -Method Get -UseBasicParsing -Headers $headers -Uri https://$HOST_NAME/orgs/$GITHUB_ORG/code-scanning/alerts

$finalResult += $response | %{$_}
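Once organisation-wide results are flattened, a per-repository count is a handy first cut for planning. The sketch below uses made-up sample data and assumes each organisation-level alert carries a repository object with a full_name property, so check that against your own output before relying on it.

```powershell
# Made-up sample alerts standing in for an organisation-wide $finalResult.
$finalResult = @(
    [pscustomobject]@{ number = 1; state = 'open'; repository = [pscustomobject]@{ full_name = 'demo-org/demo-repo' } }
    [pscustomobject]@{ number = 2; state = 'open'; repository = [pscustomobject]@{ full_name = 'demo-org/demo-repo' } }
    [pscustomobject]@{ number = 1; state = 'open'; repository = [pscustomobject]@{ full_name = 'demo-org/other-repo' } }
)

# Count alerts per repository, busiest repository first.
$summary = $finalResult |
    Group-Object -Property { $_.repository.full_name } |
    Select-Object Name, Count |
    Sort-Object -Property Count -Descending
```

The $summary object can be exported in the same way as the full alert list.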