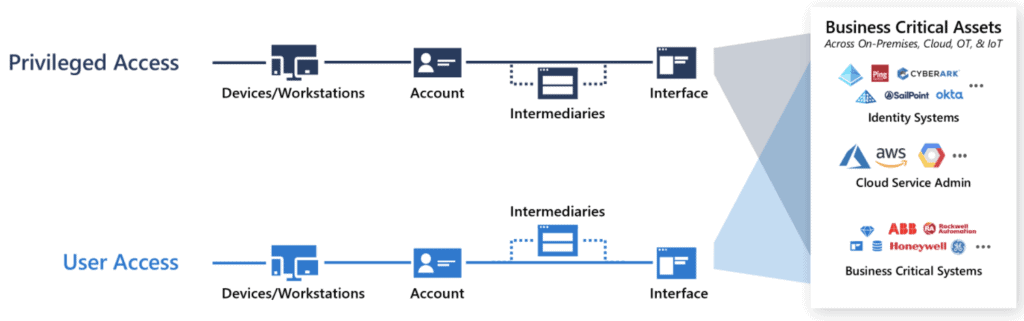

I’ve been approached a few times recently on how best to govern and secure Privileged Access using the Microsoft stack. Often this conversation is with organizations who don’t have the need, budget or skillset to deploy a dedicated solution such as those from CyberArk, BeyondTrust or Thycotic. These organizations are looking to uplift security, but are pragmatic about doing it within the ecosystem they know and can manage. This series will focus on getting the most out of Azure AD, challenging your thinking on Azure AD capabilities and using the Microsoft ecosystem to extend into hybrid environments!

What is Privileged Access?

Before we dive too deep into the topic, it’s important to understand what exactly privileged access is. Personally, I believe that a lot of organizations look at this in the wrong light. The simplest way to expand your understanding is by asking two questions.

- If someone unauthorized to see or use my solution/data had the ability to do so, would the impact to my business be negative?

- If the above occurred, how bad would it be?

The first question really focuses on the core of privileged access: it is a special right you grant your employees and partners, with the implicit trust that it won’t be abused. Using this question is good because it doesn’t just focus on administrative access, a pitfall which many organizations fall into. It also brings specialized access into scope. Question two is all about prioritizing the risk associated with each of your solutions. Understanding that intentional leakage of the organizational crown jewels matters more than someone being able to access a server will often allow you to be pragmatic with your focus in the early stages of your journey.

Building a Strategy

Understanding your strategy for securing privileged access is a critical task, and it should most definitely be distinct from any planning activities. Privileged access strategy is all about defining where to exert your effort over the course of your program. Having a short-term work effort aligned to a long-term light on the hill ensures that your PAM project doesn’t revisit covered ground.

To do this well, start by building an understanding of where your capabilities exist. Something as simple as location is adequate. For example, I might begin with: Azure Melbourne, Azure Sydney, Canberra datacenter and Unknown (SaaS & everything else).

From that initial understanding, you can begin to build out some detail, aligned to services or data. If you have a CASB service like Cloud App Security enabled, this can be a really good tool to gain insights on what is used within your environment. Following this approach, our location-based data suddenly expands to: Azure IaaS/PaaS resources, Azure Control Plane, SaaS application X, Data Platform (Storage Accounts) and Palo Alto Firewalls.

This list of services & data can then be used to build a list of the access which users have against each service. For IaaS/PaaS and SaaS app X, we have standard users and administrators. The Azure Control Plane (ARM) and the Data Platform overlap for admin access, but the Data Platform also has user access. Our networking admins have access to the Palo Alto devices, but this service is transparent to our users.
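The service-to-access list described above can be kept as a very small data structure. The following is a minimal sketch in Python, not a prescribed format: the access labels ("standard user", "administrator", "network administrator") and the helper name `services_by_access` are assumptions made for illustration, and the services are the example ones from this post.

```python
# Illustrative inventory: each service mapped to the kinds of access users hold.
# The access labels are assumed names, chosen only for this sketch.
inventory = {
    "Azure IaaS/PaaS resources": {"standard user", "administrator"},
    "SaaS application X": {"standard user", "administrator"},
    "Azure Control Plane": {"administrator"},
    "Data Platform (Storage Accounts)": {"standard user", "administrator"},
    "Palo Alto Firewalls": {"network administrator"},
}


def services_by_access(inventory):
    """Invert the inventory so each access type lists the services it touches."""
    result = {}
    for service, access_types in inventory.items():
        for access in access_types:
            result.setdefault(access, []).append(service)
    # Sort for stable, readable output.
    return {access: sorted(services) for access, services in result.items()}


if __name__ == "__main__":
    for access, services in sorted(services_by_access(inventory).items()):
        print(f"{access}: {', '.join(services)}")
```

Inverting the list this way makes overlaps visible at a glance, such as administrators spanning both the control plane and the data platform.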

Finally, build a matrix of impact, using the risks to each identity and the likelihood of occurrence. Use this data to prioritize where you will exert your effort. For example: a breach of my SaaS administrator account for a region isn’t too dangerous, because I’ve applied a zero trust network architecture and you cannot access customer data or another region from the service in question. I’ll move that access down in my strategy. My users with access to extremely business-sensitive data commonly click phishing emails. I’ll move that access up in my strategy.

Source: Twitter
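The impact and likelihood matrix can be sketched as a simple score per type of access. This is a minimal illustration only: the 1 to 5 scales, the specific numbers and the names `AccessRisk` and `prioritise` are all assumptions, and multiplying impact by likelihood is just one common way to rank risks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRisk:
    access: str
    impact: int      # assumed scale: 1 (minor) to 5 (severe)
    likelihood: int  # assumed scale: 1 (rare) to 5 (frequent)

    @property
    def score(self) -> int:
        # Simple impact x likelihood product; other weightings are possible.
        return self.impact * self.likelihood


def prioritise(risks):
    """Return risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda risk: risk.score, reverse=True)


# Illustrative numbers for the two examples in the text.
risks = [
    AccessRisk("SaaS regional administrator", impact=2, likelihood=2),
    AccessRisk("Users with access to business-sensitive data", impact=5, likelihood=4),
]

if __name__ == "__main__":
    for risk in prioritise(risks):
        print(f"{risk.score:>2}  {risk.access}")
```

With these assumed numbers, the users with access to sensitive data rank above the regional SaaS administrator, matching the ordering described in the text.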

This exercise is really important, because we have begun to build our understanding of where the value is. Based on this, a short PAM strategy could be summarized into something like this:

- Apply standard controls for all privileged users, decreasing the risk of account breach.

- Manage administrative accounts controlling identity, ensuring that access is appropriate, time bound and audited.

- Manage user accounts with access to key data, ensuring that key access is appropriate, reviewed regularly and monitored for misuse.

- Manage administrative accounts controlling infrastructure with key data.

- Apply advanced controls to all privileged users, enhancing the business process aligned to this access.

- Manage administrative accounts with access to isolated company data (no service-to-service access).

My overarching light on the hill for all of this could be summarized as: “Secure my assets, with a focus on business-critical data, enhancing the security of ALL assets in my organization.”

Planning your Solutions

After you have developed your strategy, it’s important to build a plan on how to implement each strategic goal. This is really focused on each building block you want to apply and the technology choices you are going to make. Notice how the above strategy did not focus on how we were going to achieve each item. My favourite bit about this process is that everything overlaps! Developing good controls in one area will help secure another area, because identity controls generally cover the whole user base!

The easiest way to plan solutions is to build out a controls matrix for each strategic goal. As an example, the goal

Apply standard controls for all privileged users

could very quickly be mapped out to the following basic controls:

| Solution | Control | Purpose |
|---|---|---|
| Conditional Access | Multi-Factor Authentication | Works to prevent password spray, brute force and phishing attacks. High quality MFA design combined with attentive users can prevent 99.9% of identity-based attacks. |
| Conditional Access | Sign-In Geo Blocking | Administration should be completed only from our home country. Force this behaviour by blocking access from other locations. |
| Azure AD Password Protection | Password Policy | While we hope that our administrators don’t use Summer2021 as a password, we can sleep easy knowing this will be prevented by a technical control. |

These control mappings can be as complex or as simple as needed. As a general recommendation, starting small will allow you to aggressively achieve high coverage early. From there you can re-cover the same area with deeper, more advanced controls over time. Rinse and repeat this process for each of your strategic goals. You should quickly find that you have a solution for the entire strategy you developed!
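To make the first two rows of the controls matrix a little more concrete, here is a hedged sketch of what the two Conditional Access policies might look like as request bodies for the Microsoft Graph `conditionalAccessPolicy` resource. Treat it as an illustration under assumptions, not a definitive configuration: the field names reflect my understanding of the Graph schema and should be checked against the current documentation, the display names and helper functions are hypothetical, the named location ID is a placeholder you would replace with your own, and both policies are left in report-only mode so nothing is enforced by accident.

```python
import json

# Role template ID for the built-in Global Administrator role.
GLOBAL_ADMIN_ROLE_TEMPLATE_ID = "62e90394-69f5-4237-9190-012177145e10"

# Placeholder: ID of a named location representing your home country.
HOME_COUNTRY_LOCATION_ID = "<named-location-id>"


def mfa_policy(role_ids):
    """Require MFA for sign-ins by the given directory roles (sketch)."""
    return {
        "displayName": "Require MFA for privileged roles",  # hypothetical name
        "state": "enabledForReportingButNotEnforced",       # report-only
        "conditions": {
            "clientAppTypes": ["all"],
            "users": {"includeRoles": list(role_ids)},
            "applications": {"includeApplications": ["All"]},
        },
        "grantControls": {"operator": "OR", "builtInControls": ["mfa"]},
    }


def geo_block_policy(role_ids, allowed_location_id):
    """Block the given roles from every location except the allowed one (sketch)."""
    return {
        "displayName": "Block privileged sign-in outside home country",  # hypothetical
        "state": "enabledForReportingButNotEnforced",
        "conditions": {
            "clientAppTypes": ["all"],
            "users": {"includeRoles": list(role_ids)},
            "applications": {"includeApplications": ["All"]},
            "locations": {
                "includeLocations": ["All"],
                "excludeLocations": [allowed_location_id],
            },
        },
        "grantControls": {"operator": "OR", "builtInControls": ["block"]},
    }


if __name__ == "__main__":
    roles = [GLOBAL_ADMIN_ROLE_TEMPLATE_ID]
    print(json.dumps(mfa_policy(roles), indent=2))
    print(json.dumps(geo_block_policy(roles, HOME_COUNTRY_LOCATION_ID), indent=2))
```

Starting in report-only mode fits the "start small" advice: you can see who would be affected before moving a policy to an enforced state, and always exclude an emergency access account before enforcing a block.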

Up Next

If you’ve stuck with me for this long, thank you! Securing Privileged Access really is a critical process for any cyber security program. Hopefully you’re beginning to see some value in really expanding out a strategy and planning phase for your next privileged access management project. Over the next few posts, I’ll elaborate on what can be done using Azure AD, and some tips and techniques to help you stay in control. Topics we will cover are:

- Strategy & Planning (This Post)

- Azure AD Basics

- Hybrid Scenarios

- Zero Trust

- Protecting Identity

- Staying in Control

Until next time, stay cloudy!

Originally Posted on arinco.com.au