As a consultant who spends large amounts of time implementing customer solutions, I’ve found that automation has become a key part of my job. A technology that can be automated is instantly more attractive for me to use. This is one of the key reasons that I love Terraform. It enables me to write cross-platform automation, and when a product isn’t natively supported, I can write a custom provider. That’s why the recent announcement of a custom Terraform provider for Okta is my favorite feature announcement of 2019, and why I’ll be covering the basics of Okta and Terraform in this blog. If you’re not sure how to use Terraform, have a look here for an initial overview; otherwise, let’s dive in!

Setting up – Building the provider and API key generation.

The first thing you’ll need to do is build the Okta provider. This isn’t too hard; however, the GitHub readme is written for Unix users. If you’re on Windows like me, you will need a basic understanding of how to compile Go and how to use custom providers in Terraform.

First, clone the Git repo and cd into it.

Next, build the provider. Note that if you try to run the generated EXE directly, you’ll be told that the file is a plugin. To stick with the Terraform documentation, I’ve used the following EXE naming format: terraform-provider-PRODUCT-vX.Y.Z.exe

Finally, copy the provider into the Terraform plugin path. For 64-bit Windows, this is generally: %APPDATA%\terraform.d\plugins\windows_amd64

Now that we have a functional provider for Terraform, it’s time to generate an API key. Please be careful with these keys, as they inherit your Okta permissions and shouldn’t be left lying around!

Start in the Okta portal, navigate to Security and then API. You should find the following button on the top left.

Fill in a token name. If you’re using this in production, it’s worth recording what the token is used for here!

Insert your freshly generated API token into the following Terraform HCL!

    provider "okta" {
      org_name  = "demoorg"
      api_token = "API TOKEN HERE"
      base_url  = "okta.com"
    }
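Since these tokens shouldn’t be left lying around, one option is to keep the token out of the HCL file altogether. The block below is a minimal sketch of the same provider configuration driven by a Terraform input variable. The variable name okta_api_token is my own placeholder rather than anything the provider requires, and the interpolation style matches the rest of this post.

```hcl
# Hypothetical variable name; supply the value at run time, for example by
# setting the TF_VAR_okta_api_token environment variable before running Terraform.
variable "okta_api_token" {
  description = "Okta API token used by the provider"
}

provider "okta" {
  org_name  = "demoorg"
  api_token = "${var.okta_api_token}"
  base_url  = "okta.com"
}
```

This is an alternative to the block above, not an addition to it. The token never lands in source control this way, although it will still pass through whatever shell or pipeline sets the variable.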

Building resources

Now that we have a provider configured, let’s create some resources. Thankfully, the provider developers (Articulate) have documented common use cases for the provider here. Creating a user isn’t too difficult, provided you have the four mandatory fields handy (first name, last name, login and email):

    resource "okta_user" "XelloDemo" {
      first_name = "Xello"
      last_name  = "Okta Terraform"
      login      = "Xello.OktaTerraform@xellolabs.com"
      email      = "Xello.OktaTerraform@xellolabs.com"
      status     = "STAGED"
    }

Running terraform init starts the deployment process by initializing the working directory and loading the Okta provider.

Then run terraform apply to deploy!

Now, deploying a user isn’t too imaginative or special. After all, you can easily bulk import from a CSV, and scripting user creation against the API isn’t too hard. This is where the power of Articulate’s provider comes in. It currently supports the majority of the Okta API, and the public can contribute support for anything that is missing. Let’s look at something a bit more advanced.

    resource "okta_user" "AWSUser1" {
      first_name = "XelloLab"
      last_name  = "AWSDemo"
      login      = "XelloLab.AWSDemo@xellolabs.com"
      email      = "XelloLab.AWSDemo@xellolabs.com"
      status     = "ACTIVE"
    }

    resource "okta_group" "AWSGroup" {
      name        = "AWS Assigned Users"
      description = "This group was deployed by Terraform"

      users = [
        "${okta_user.AWSUser1.id}"
      ]
    }

    resource "okta_app_saml" "test" {
      preconfigured_app = "amazon_aws"
      label             = "AWS - Terraform Deployed"
      groups            = ["${okta_group.AWSGroup.id}"]

      users {
        id       = "${okta_user.AWSUser1.id}"
        username = "${okta_user.AWSUser1.email}"
      }
    }

In the above Terraform code, we have a user being configured, added to a group, and then assigned to an Okta Integration Network application. Rather than clicking through the Okta portal, I’ve relied on infrastructure as code to deploy all the resources. I can even begin to combine providers to deliver cross-platform integration. This snippet will work nicely with the AWS identity provider, enabling me to neatly configure the SAML integration between the two services without leaving my shell or pipeline. The end result? No click ops, just code, and the AWS application configured in a matter of minutes!

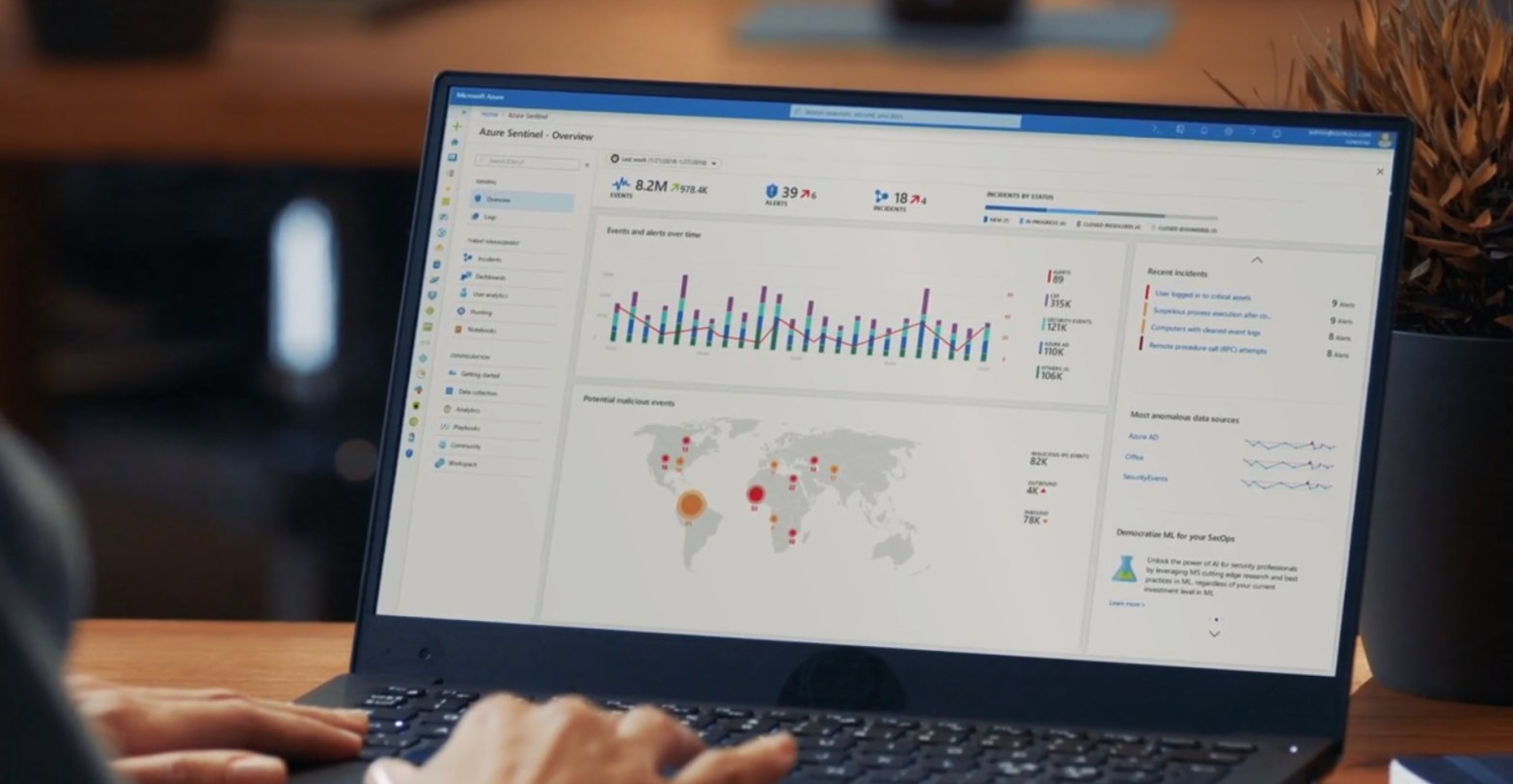
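To give a rough idea of what combining providers could look like, here is a minimal sketch that hands the Okta application’s SAML metadata to AWS. It assumes an AWS provider is configured in the same workspace and that the okta_app_saml resource exposes its metadata XML as a metadata attribute in the provider version you build. The region and the resource name okta are placeholders of mine, so check both against your own setup.

```hcl
# Assumption: AWS credentials are supplied outside this file; the region is a placeholder.
provider "aws" {
  region = "ap-southeast-2"
}

# Registers Okta as a SAML identity provider in AWS IAM.
# Assumption: okta_app_saml.test exports its SAML metadata XML as "metadata".
resource "aws_iam_saml_provider" "okta" {
  name                   = "Okta"
  saml_metadata_document = "${okta_app_saml.test.metadata}"
}
```

Because the AWS resource references the Okta application directly, Terraform works out the ordering itself and creates the Okta side first.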

Fast and Furious – Okta Drift

One of the things that Terraform is really excellent for is minimizing configuration drift. Regularly running terraform apply, either from your laptop or a CI/CD pipeline, can ensure that applications are maintained as documented and deployed. In the example below, you can see Terraform correcting an undesired application update.

You shouldn’t have to worry about an overeager intern destroying your application setup. With the Okta/Terraform combo, the next apply puts things back the way they were documented!

Cleaning up

The other super useful thing about using Terraform is the cleanup process. I’ve lost count of how many times I’ve clicked through a portal or navigated to an API doc just to bulk delete resources. Okta users immediately come to mind for this problem! By running terraform destroy, I can immediately clean up my environment. Great for testing out new functionality or lab scenarios.

Hopefully by now you’re beginning to understand some of the options available when configuring Okta with Terraform. For my day-to-day work as a consultant, this is an excellent integration, and the varied cross-platform use cases are nearly limitless. As always, for any questions please feel free to reach out to me or the team!
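As a sketch of the kind of throwaway lab this suits, the block below stamps out a small batch of staged users using Terraform’s count argument. The variable name, resource name and login pattern are all hypothetical; the fields are the same ones used for the single user earlier.

```hcl
# Hypothetical lab setup: the number of users is controlled by one variable.
variable "lab_user_count" {
  default = 3
}

resource "okta_user" "LabUser" {
  count = "${var.lab_user_count}"

  # count.index starts at 0, so add 1 for friendlier numbering.
  first_name = "Lab"
  last_name  = "User${count.index + 1}"
  login      = "lab.user${count.index + 1}@xellolabs.com"
  email      = "lab.user${count.index + 1}@xellolabs.com"
  status     = "STAGED"
}
```

A single terraform destroy then removes every user created from this block, which is exactly the bulk delete that is so tedious to do through the portal.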