If you’ve been keeping score at home, the Azure AI platform has had more name changes than a witness protection program. Azure AI Studio became Azure AI Foundry at Ignite 2024, and then, barely six months later at Build 2025, Microsoft dropped the “Azure” entirely and rebranded the whole thing to Microsoft Foundry. I’ll admit my first reaction was “here we go again.” But this time it’s not just a name change. Microsoft has genuinely consolidated the platform underneath, and the result is the most significant simplification of the Azure AI developer experience since… well, since they first launched Azure OpenAI Service.

This post will walk through the rebrand lineage (because keeping track of the names alone can be daunting), what actually changed architecturally, what the migration path looks like for existing Azure OpenAI users, and what’s still hanging around in “Foundry Classic.” Let’s dive in!

The Name Game: A Brief History

Let me save you some confusion with a quick timeline:

- Azure AI Studio (2023): The original portal for working with Azure OpenAI models, prompt engineering, and fine-tuning.

- Azure AI Foundry (Ignite 2024, November): Rebranded and expanded to include hubs, projects, and a broader set of AI services beyond just OpenAI models.

- Microsoft Foundry (Build 2025, May 19): The big one. Not just a rebrand but a genuine platform consolidation. Azure OpenAI, Azure AI Services, and the old hub-based project model all fold into a single unified resource.

The important bit is that last step. Microsoft didn’t just change the logo. They created a new resource type, unified the APIs, consolidated the SDKs, and redesigned how projects, endpoints, and access control work.

What Actually Changed (Architecturally)

Here’s the before-and-after, because this is where it gets genuinely interesting:

| Dimension | Before | After |
|---|---|---|
| Resource model | Hub + Azure OpenAI resource + AI Services (separate resources) | Single Foundry resource with projects |
| Endpoints | 5+ separate service endpoints | One project endpoint |
| API versioning | Monthly api-version query parameters | Stable v1 routes (/openai/v1/) |
| SDKs | Multiple packages (Azure.AI.Inference, Azure.AI.Generative, Azure.AI.ML, AzureOpenAIClient) | Unified Azure.AI.Projects + standard OpenAI NuGet package |
| Agent API | Assistants API (Threads, Runs, Messages) | Responses API (Conversations, Items, Responses) |
| Portal | Foundry Classic | New Foundry portal at ai.azure.com |

In my opinion, the single biggest win here is the resource consolidation. Previously, building an agent that needed an OpenAI model, a search index, and content safety required three separate Azure resources, each with its own endpoint, RBAC, and networking config. Now it’s one Foundry resource with one project endpoint. The reduction in operational overhead is substantial.

The v1 API Routes: No More api-version Madness

If you’ve worked with Azure OpenAI, you know the pain of api-version query parameters. Every few months a new preview version drops, features move between versions, and you end up with code littered with version strings like 2024-08-01-preview that you’re never quite sure are current.

Microsoft Foundry introduces stable v1 routes. Instead of:

https://my-resource.openai.azure.com/openai/deployments/gpt-4o/chat/completions?api-version=2024-08-01-preview

You now use:

https://my-resource.openai.azure.com/openai/v1/responses

This means you can use the standard OpenAIResponseClient from the OpenAI NuGet package (not the Azure-specific AzureOpenAIClient wrapper) pointed directly at your Azure resource:

```csharp
// Install packages:
// dotnet add package OpenAI
// dotnet add package Azure.Identity

using OpenAI;
using OpenAI.Responses;
using System.ClientModel.Primitives;
using Azure.Identity;

// The Responses client is still flagged as experimental in the OpenAI package.
#pragma warning disable OPENAI001

// Authenticate with Microsoft Entra ID instead of an API key.
BearerTokenPolicy tokenPolicy = new(
    new DefaultAzureCredential(),
    "https://ai.azure.com/.default");

// On Azure, "model" is the name of your model deployment.
OpenAIResponseClient client = new(
    model: "gpt-4.1",
    authenticationPolicy: tokenPolicy,
    options: new OpenAIClientOptions()
    {
        // Stable v1 route: no api-version query parameter.
        Endpoint = new Uri("https://YOUR-RESOURCE-NAME.openai.azure.com/openai/v1")
    });

OpenAIResponse response = await client.CreateResponseAsync("What is Microsoft Foundry?");

Console.WriteLine(response.GetOutputText());
```

This is brilliant for portability. The same client code works against OpenAI directly or Azure OpenAI with just an endpoint swap. No more Azure-specific SDK wrappers or version parameter juggling.
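To make the "endpoint swap" idea a bit more concrete, here's a minimal sketch of how you might build the old and new URLs side by side and pick a base endpoint from configuration. The helper names (LegacyChatCompletionsUri, AzureV1BaseUri, ResolveBaseUri) are my own invention for illustration, not part of any SDK, and in a real app you'd also need to swap the credential (an API key for OpenAI directly, an Entra ID token or key for Azure), not just the URL.

```csharp
using System;

// Legacy route: deployment name in the path, dated api-version in the query string.
static Uri LegacyChatCompletionsUri(string resource, string deployment, string apiVersion) =>
    new($"https://{resource}.openai.azure.com/openai/deployments/{deployment}/chat/completions?api-version={apiVersion}");

// v1 base route on the same Azure resource: no api-version parameter.
static Uri AzureV1BaseUri(string resource) =>
    new($"https://{resource}.openai.azure.com/openai/v1");

// OpenAI's own public API base URL, for comparison.
static Uri OpenAIBaseUri() => new("https://api.openai.com/v1");

// Hypothetical switch: use the Azure resource when a name is configured,
// otherwise fall back to OpenAI directly.
static Uri ResolveBaseUri(string? azureResource) =>
    string.IsNullOrWhiteSpace(azureResource)
        ? OpenAIBaseUri()
        : AzureV1BaseUri(azureResource);

Console.WriteLine(LegacyChatCompletionsUri("my-resource", "gpt-4o", "2024-08-01-preview"));
Console.WriteLine(ResolveBaseUri("my-resource"));
Console.WriteLine(ResolveBaseUri(null));
```

The value returned by ResolveBaseUri is what you'd assign to OpenAIClientOptions.Endpoint in the snippet above. Notice that the deployment name and the version string have both disappeared from the URL, which is really the whole point of the v1 routes.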

One-Click Upgrade from Azure OpenAI

For existing Azure OpenAI users (which, let’s be honest, is most of us), the migration story is pretty straightforward. Microsoft offers a one-click upgrade that converts your Azure OpenAI resource into a Foundry resource while preserving:

- Your existing endpoint URL

- All API keys

- Deployed models and their configurations

- Existing state and data

After the upgrade, you get a Foundry resource with a default project. Your old code keeps working because the endpoint is preserved. You can then start using the new v1 routes and the Responses API at your own pace.

Be warned: The upgrade is one-way. Once you convert to a Foundry resource, you can’t go back to a standalone Azure OpenAI resource. In my opinion, that’s fine since there’s no feature regression, but it’s worth knowing before you click the button.

The Unified SDK: Azure.AI.Projects

The old SDK story was, to put it politely, fragmented. Depending on what you were doing, you might need Azure.AI.Inference, Azure.AI.Generative, Azure.AI.ML, or the OpenAI package with the AzureOpenAIClient wrapper. Each had slightly different authentication patterns and endpoint requirements.

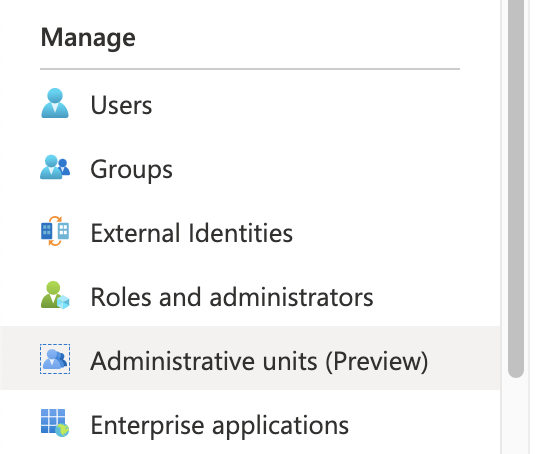

The Azure.AI.Projects SDK consolidates this into a single project client. You authenticate once, and you get access to models, agents, evaluations, tracing, and tools through one interface.

For inference specifically, you can use the standard OpenAIResponseClient against the v1 routes (as shown above). For platform operations like creating agents, running evaluations, or configuring tools, you use the AIProjectClient. Two NuGet packages, clear separation of concerns.

Foundry Classic: What Stays Behind

Not everything has moved to the new portal yet. Microsoft is maintaining the Foundry Classic portal for scenarios that aren’t yet supported in the new experience:

- Standalone Azure OpenAI resources not connected to a Foundry project

- Assistants v1 creation and authoring

- Audio playground

- AI service fine-tuning

- Content Understanding (moving to new portal soon)

- Hub-based project workflows

If you’re using any of these, you’ll need to keep working in Classic for now. The good news is that Classic isn’t going away imminently, and Microsoft is actively migrating features across. The roadmap is to eventually have everything in the new portal.

What’s GA vs Preview

This is important for production workloads. At the time of writing, here’s the high-level readiness:

- GA (production-ready): Model discovery and deployment, agent development (Agents v2), evaluations, fine-tuning, red teaming, RBAC, quota management, speech playgrounds

- Preview (not yet production-ready): Workflows, tracing, monitoring, memory, guardrails for agents, knowledge, AI Gateway

If you’re running production workloads, I’d strongly recommend sticking to GA features and using the Foundry GA guide to validate your feature requirements before committing.
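If you want to make that check a little more mechanical, a tiny script along these lines can flag anything on your requirements list that's still in preview. To be clear, this is just a sketch: the feature names are copied from the list above as it stood at the time of writing, FindPreviewFeatures is a hypothetical helper of mine, and none of this queries a real Foundry API, so you'd still want to cross-check against the official guide.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Preview features as listed in this post at the time of writing.
// This is a hand-maintained list, not something fetched from Foundry.
var previewFeatures = new HashSet<string>(StringComparer.OrdinalIgnoreCase)
{
    "workflows", "tracing", "monitoring", "memory",
    "guardrails for agents", "knowledge", "ai gateway"
};

// Hypothetical helper: return the required features that are still in preview.
List<string> FindPreviewFeatures(IEnumerable<string> required) =>
    required.Where(feature => previewFeatures.Contains(feature)).ToList();

// Example requirements for an imaginary production workload.
var blockers = FindPreviewFeatures(new[] { "evaluations", "tracing", "fine-tuning", "memory" });

Console.WriteLine(blockers.Count == 0
    ? "All required features are GA."
    : $"Still in preview: {string.Join(", ", blockers)}");
```

With the sample requirements shown, tracing and memory get flagged, while evaluations and fine-tuning pass because they're on the GA list.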

Who Is This Actually For?

Microsoft positions Foundry for three audiences, and I think this framing is spot on:

- Application developers building AI-powered products with agents, models, and tools. This is the primary audience, and the portal’s “Discover, Build, Operate” flow reflects this.

- ML engineers and data scientists who fine-tune models, run evaluations, and manage deployments.

- Platform engineers and IT admins who need to govern AI resources, enforce policies, and manage access across teams.

If you’re in that first group (and I suspect most readers of this blog are), the simplification from multiple resources and SDKs down to one Foundry resource and two packages is a genuine quality-of-life improvement.

Wrapping Up

Microsoft Foundry is not just another rebrand. It’s a genuine architectural consolidation that simplifies how we build, deploy, and manage AI applications on Azure. The unified resource model, stable v1 API routes, consolidated SDK, and one-click upgrade path all point to a platform that’s maturing rapidly.

Is it perfect? No. There are still features stuck in Classic, preview capabilities that aren’t production-ready, and the name changes have been genuinely confusing. But the direction is right, and for anyone starting fresh or planning a migration, Foundry is clearly where Microsoft wants you to be.

As always, feel free to reach out with any questions or comments!

Until next time, stay cloudy!